What’s Your Number? Why Your Engineers Need Better Metrics

Adam Cohen is the cofounder of Weave, an engineering analytics company that helps teams go AI-first. Before Weave, he was head of operations and sales at education software firm Top Hat and VP of operations and revenue at Causal, which was acquired by Lucanet in 2024.

In sales, you always know exactly where you stand. In engineering, you don’t.

The sales leaderboard shows the top performers, the basement-dwellers, and everyone in between. At first, this is terrifying, but it quickly becomes inspiring. If you know who’s outselling you, you can reverse-engineer a way to improve. At Top Hat, I built a dashboard that showed every sales rep their conversion rates. Armed with this data, they practically started coaching themselves.

Now, tools like Gong have made it easy for salespeople anywhere to identify ways to improve. Gong pulls data from multiple sources, measures performance through metrics known to move the needle, then provides teams with actionable data.

But when I moved into running customer success and marketing, I found nothing comparable. No leaderboard, no analytics, no tools like Gong. Without clear and meaningful metrics, career and company development became guesswork.

I was talking about this with Andrew (now my cofounder), a founding engineer at Causal, while I was VP of operations and revenue there. I mentioned I was trying to cobble together something like Gong for customer success using ChatGPT and other tools. That's when we both had the thought: "Wait, why don't engineers have anything like this?"

They’re building the product in the dark, with few good tools for measuring impact, even for seemingly basic things like ship rate. They can't tell how much of their pace comes down to difficult code fixes, how much to individual speed, and where team communication is costing them time.

The vision became clear: give engineers the same self-improvement capabilities that transformed sales teams.

The Version No One Would Pay For

Our first version was essentially a summarization tool that lived in Slack. We integrated with Slack, Git, and Jira, then provided a rundown: "Here's what's happening, here's what went well, here's who needs support." We even tried to predict burnout by analyzing work trends, like when people were working. (The verdict: too creepy, and it didn't add enough value.)

The feedback from engineering leaders was consistent: "Yeah, it could be helpful, but it feels like noise. I already know what's going on. I need help actually doing something about it."

So we started making suggestions, mostly vague insights like:

"Your PR is too large. Break it down."

"You're context-switching too much."

We signed $70,000 in LOIs, but when it came time to convert those into actual contracts, nobody would pay.

They wanted proof: “How do you know this will help us ship faster?”

After many interactions like this, our blind confidence morphed into fear. Maybe they were right. Here I was, a new founder, telling engineering leaders I could measure something they believed was impossible. Of course they were skeptical; I wouldn't have believed me either.

Showing, Not Selling

Instead of arguing with my prospective clients, I dug into my sales experience. We needed to reverse-engineer our way up the leaderboard. And the quickest way to do that was by finding the metrics that mattered.

The constant demand to "prove ROI" made us realize we had it backwards. You can't provide effective suggestions without getting accurate measurements first. What makes a PR “too large”? When is the context-switching “too much”? You can’t improve something you can’t quantify.

So, we created "Weave Hours." Think of them as expert engineer hours. We scan every PR and determine how long it should take an expert engineer (i.e., 5+ years at a top tech company, deeply familiar with the codebase) to complete.

This gives us a standardized measure of actual work output, not just activity or time spent.

Once we had that, we could start moving from productivity platitudes to precision coaching at scale. Instead of generic advice, we started providing hyper-specific interventions:

“Your Cursor rules file is producing an 18% lower acceptance rate than average. Optimize by doing this.”

"This collaboration pattern reduces your output by 40%. Try this approach instead."

To show it worked, we built proof into our sales process. Now, before every sale, we connect a prospective customer’s actual data and walk through their recent PRs together. The "wow" moment happens when they see our model accurately assess something complex, such as understanding why a seemingly small bug fix was actually incredibly difficult, or recognizing the true scope of a refactoring effort.

Now that engineers see their own work reflected accurately in the data, they realize this isn't just another monitoring tool. Because it understands their craft, it can give insights that actually improve it.

Why It Matters

Because of AI, we're witnessing the most significant productivity shift in software engineering history.

That’s what the hype says, at least.

But our data backs it up. Since January, we’ve seen a 16% average increase in productivity across all the companies we measure. Some teams have hit 3x productivity gains.

Hybrid human-AI teams are emerging, but most organizations have no idea how to optimize these new workflows. They're flying blind into an era where every engineer will soon manage a team of AI agents, coordinate complex human-AI workflows, and orchestrate systems of unprecedented complexity. Traditional management approaches will completely break down.

They need to be able to do what we did at Top Hat: coach themselves. To do that, engineers need to know things like:

Which agents perform best for different tasks

The true cost-benefit of various AI tools

How to maximize human-AI collaboration

The companies that master this first will have an insurmountable competitive advantage. The ones that don't won’t be on the leaderboard.

Replies

Product Hunt

Love this thread. And I have really enjoyed using Weave at Product Hunt. This is probably a very common thought, but I'll raise it anyway. From the perspective of an engineer: sales has clear outcomes. Was the contract signed? How big was the contract? Did the customer renew the next year?

Engineering is much more nuanced. Defining a successful PR is tricky. Is code churn bad? Maybe... It could be that a very experimental feature was shipped, we learned a ton from it and are now iterating on the feature, throwing out a bunch of experimental code.

I am loving Weave's abstractions of Weave Hours, AI Code %, Review Quality, and Innovation Ratio. They feel directionally correct. But I am having a hard time fully trusting these metrics since they are a black box to me. And, as an engineer, if I don't understand something, I don't fully trust it.

Weave

So useful for getting a good gauge on how you are doing and where to improve.

Purposeful Poop

This seems (reasonably) pointed at teams. Is there a way for an individual to use this?

Applying for jobs: "here is a report that shows how I am a 10x developer."

Is there a path to something like that?

I'm generally skeptical of this, but it's also very clear that some engineers are stronger than others. It feels like a bit of a cat-and-mouse game trying to win against Goodhart's law. Even cases where the goals appear very clear, like sales, seem like they can be way more nuanced: someone selling a ton of deals with bad terms, overall bad direction (quick hits vs. sustainable customers), blah blah.

I'm sure you hear this line of thinking every day, so you probably have a pretty developed take on it, which is what I'm interested in.

Thanks for posting. Interesting food for thought.

Weave

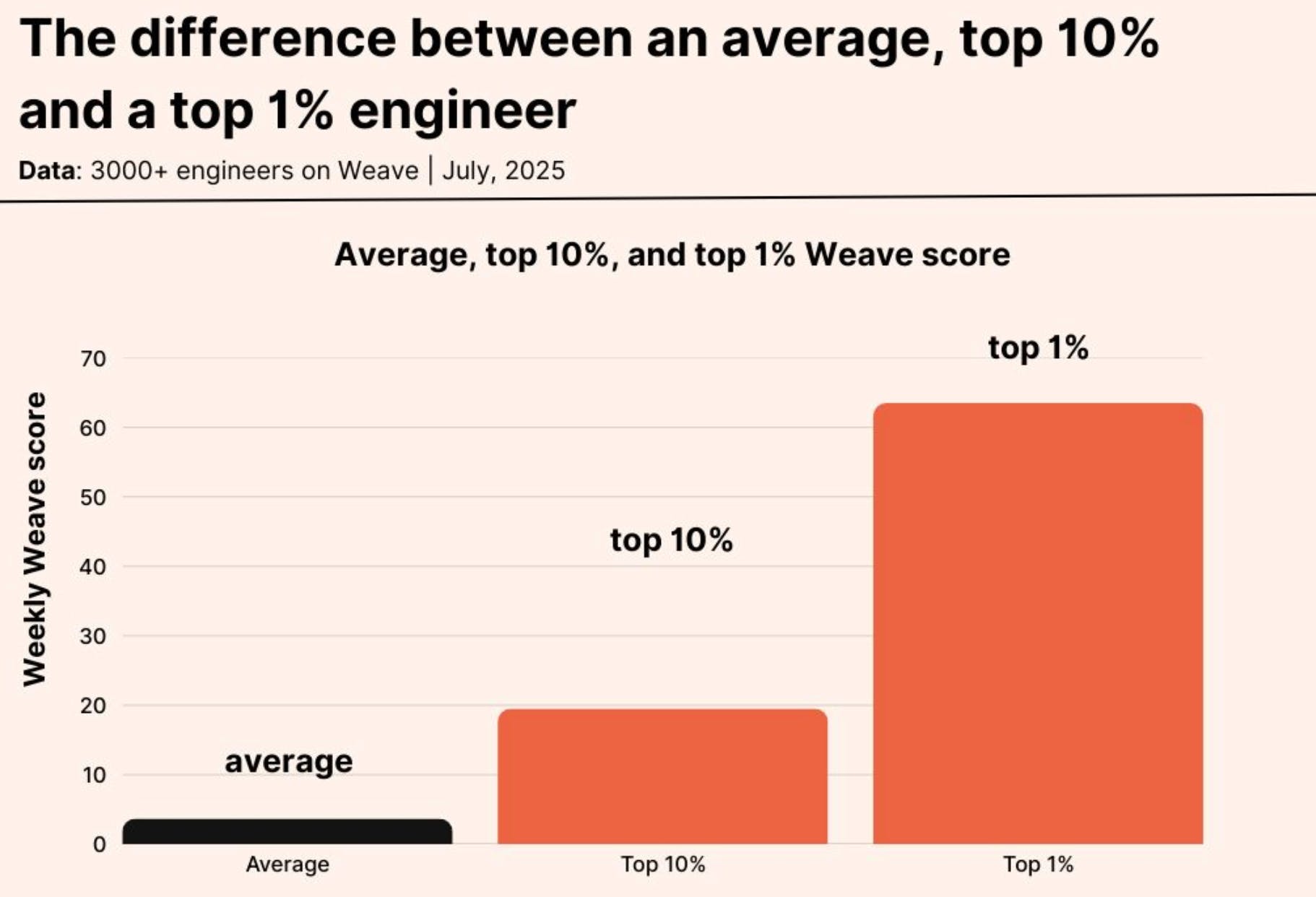

@catt_marroll Yes, we'd like to get there. We want to support engineers as much as possible. Right now we've created a LinkedIn badge (see the image), or check out Steven here: https://github.com/steventohme. The link takes you to his latest code output, where you can see he recently hit the top 1%, which shows he's pretty cracked.

You can connect Weave to personal repos and display the results, but unless your manager grants access to your work codebase, you'll miss a significant amount of your output. We don't know how to get around this one just yet.